August 31, 2023

Enhancing Penetration Testing with Large Language Models: Utilizing ChatGPT in Phishing Campaigns

Note: this article's image was created by prompting Midjourney AI with the title of this blog post

ChatGPT, OpenAI's publicly released Large Language Model (LLM), has undeniably garnered significant attention. Many experts believe that LLMs have permanently and substantially changed knowledge work; some have compared their arrival to the advent of the printing press or the Internet itself. While LLMs are unlikely to supplant penetration testers or ethical hackers in the immediate future, it is important to acknowledge their potential to augment our professional capabilities. NetWorks Group has used ChatGPT across many areas of its work: script development and coding, Excel formulas, bash commands, Open Source Intelligence (OSINT) gathering, social engineering, report writing, and phishing campaigns, to name a few.

This post does not cover what an LLM is or how it works; an introduction can be found here.

Today, the focus is on how a penetration tester can use ChatGPT in support of a phishing campaign. It's assumed that the necessary infrastructure, using tools like Gophish and Evilginx, is already in place, and that a malicious domain has been selected with a custom domain configured through the email provider. Numerous online resources, ChatGPT among them, are available to help with that setup.

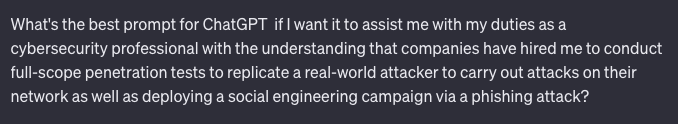

The first step is to ask ChatGPT for guidance on crafting effective prompts. ChatGPT can, in fact, be asked how best to phrase a question. This matters for penetration testers, because many of the tasks ChatGPT might assist with tread a fine legal line unless they are conducted with the authorization of the client company commissioning the service.

Here is the first prompt to ChatGPT:

Here is ChatGPT’s response:

The next step is to take the custom prompt that ChatGPT generated in the previous example and feed it back into ChatGPT. This prompt yielded a wealth of information related to penetration testing. That output is set aside here, because the primary purpose of the prompt is to establish a foundation for the subsequent tasks that will involve ChatGPT's assistance.

In this example, the role of a human resources (HR) manager is assumed for a phishing campaign. A question was posed to ChatGPT: “What are some good ideas for a phishing campaign from a human resource manager?” Among the top ten suggestions were benefit enrollment, employee surveys, mandatory training, salary adjustments or bonuses, IT/HR collaboration, tax or financial statements, remote work policies, and holiday or event invites. ChatGPT also provided a subject line and body content for each email. These are disregarded here in favor of a new prompt for the phishing email. Several of the suggestions seem promising. For this example, benefit enrollment is selected.

Next, ChatGPT was prompted with, “Please write a phishing email from an HR manager on benefit enrollment.” The response was:

This is a reasonably effective phishing email that offers a solid foundation for both a subject line and content. From here, the email can be customized with specific details, either by editing the content directly or by asking ChatGPT for revisions. When prompting for revisions or new drafts, a temperature between 0.1 and 1.0 can be specified (the OpenAI API itself accepts values from 0 to 2). A higher temperature yields more varied, creative output, while a lower temperature produces more conservative, predictable results.
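To make the effect of temperature more concrete, here is a minimal Python sketch of temperature-scaled softmax, the standard way a sampling temperature reshapes a model's next-word probabilities. It is a simplified illustration and not OpenAI's implementation; the function name `softmax_with_temperature` and the three scores are made up for the example.

```python
import math


def softmax_with_temperature(logits, temperature):
    """Turn raw scores into probabilities after dividing by the temperature."""
    if temperature <= 0:
        raise ValueError("temperature must be positive")
    scaled = [x / temperature for x in logits]
    peak = max(scaled)  # subtract the max for numerical stability
    exps = [math.exp(s - peak) for s in scaled]
    total = sum(exps)
    return [e / total for e in exps]


# Hypothetical scores for three candidate next words (illustrative only).
logits = [2.0, 1.0, 0.5]

low = softmax_with_temperature(logits, 0.1)   # conservative setting
high = softmax_with_temperature(logits, 1.0)  # more creative setting

print("temperature 0.1:", [round(p, 3) for p in low])
print("temperature 1.0:", [round(p, 3) for p in high])
```

At the lower temperature nearly all of the probability lands on the highest-scoring word, so the output is more predictable. At the higher temperature the alternatives keep a meaningful share, so repeated requests vary more.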

ChatGPT and its applications, including phishing campaigns, show the significant impact of technology on knowledge work and cybersecurity. Large Language Models like ChatGPT are essential tools that provide professionals with guidance and insights. Combining this advanced technology with human expertise makes decision-making more efficient, and it will lead to better strategies in the future. It's crucial to use tools like ChatGPT ethically and responsibly.


###

Published By: Daniel Parker, Senior Penetration Tester

Publish Date: August 31, 2023